On Thursday, Meta announced that it is deploying advanced AI systems to strengthen content enforcement across its platforms. The move marks a shift away from reliance on third-party vendors, with the goal of handling content related to terrorism, child exploitation, drugs, fraud, and scams more effectively.

The company plans to roll out these AI systems as they demonstrate better performance than existing content moderation methods. Meta emphasizes that human reviewers will still play a role, while the AI systems take on tasks better suited to automation, such as repetitive reviews of graphic content and keeping up with the evolving tactics of malicious actors.

Meta's early tests show promising results: the AI systems detected adult sexual solicitation violations at twice the rate of human review teams while reducing the error rate by more than 60%. The systems can also identify and block impersonation of high-profile individuals, and they help prevent account takeovers by watching for unusual login activity and profile changes.

The AI systems can also recognize and mitigate approximately 5,000 scam attempts per day in which fraudsters try to trick users into revealing their login information.

In a blog post, Meta highlighted the importance of human oversight, stating, "Experts will design, train, oversee, and evaluate our AI systems, measuring performance and making the most complex decisions." Under this approach, critical decisions, such as appeals of account disablement or reports to law enforcement, remain in human hands.

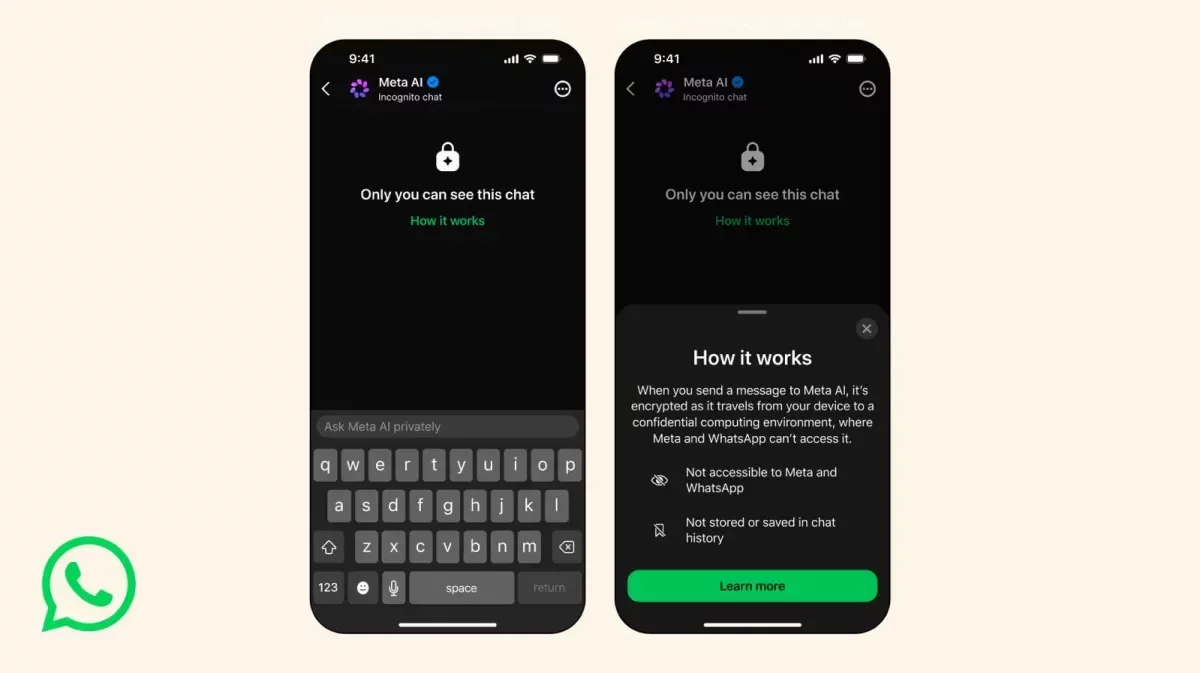

Meta also introduced a new Meta AI support assistant that offers users 24/7 help across Facebook and Instagram on both mobile and desktop.

The initiative fits with Meta's ongoing efforts to refine its content moderation strategies as digital communication changes. By leaning on advanced AI, Meta aims to create a safer online environment while balancing automation with human oversight.

As technology continues to evolve, the integration of AI in content moderation could significantly enhance online safety and user experience, paving the way for more responsible digital interactions in the future.