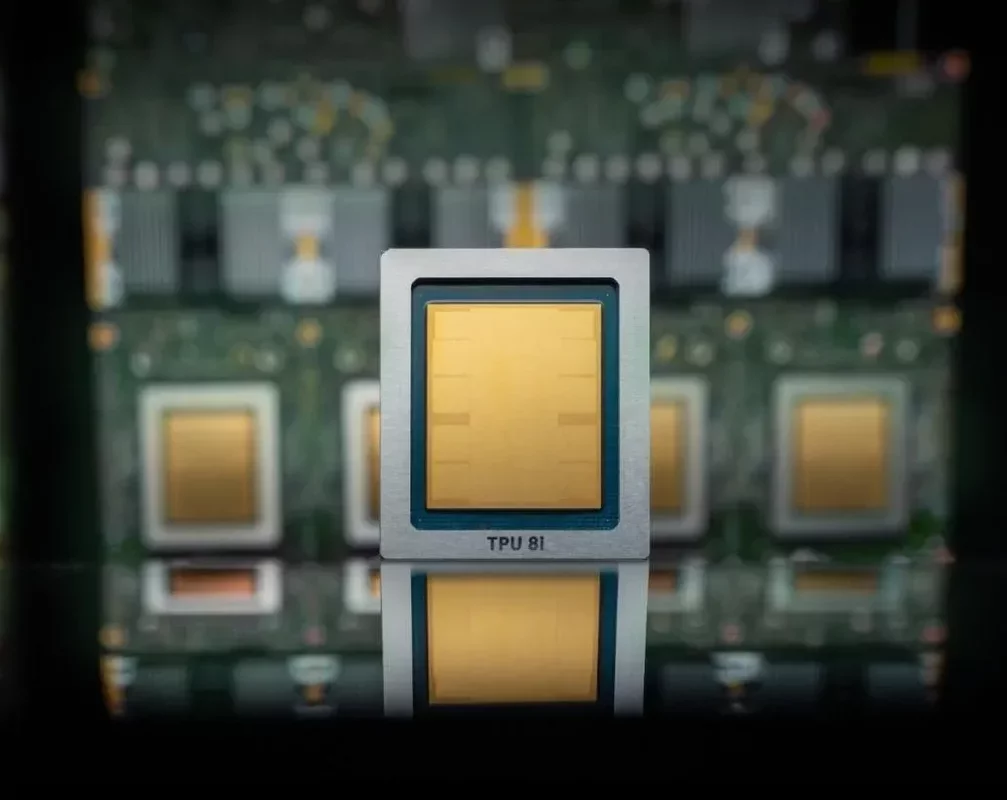

On Wednesday, Google Cloud announced the eighth generation of its custom-designed AI chips, known as tensor processing units (TPUs). The new generation comes in two distinct models: the TPU 8t, optimized for model training, and the TPU 8i, built for inference, the stage in which a trained model generates responses to user prompts.

Google claims substantial gains over previous versions: up to three times faster AI model training, 80% better performance per dollar, and the ability to run more than one million TPUs together in a single cluster. If those figures hold, customers would get significantly more compute while using less energy and paying less.

However, the new chips are not a direct challenge to Nvidia's dominance, at least not yet. Like other major cloud providers, including Microsoft and Amazon, Google is adding its own chips to an infrastructure that still relies on Nvidia-based systems. Notably, Google has also committed to offering Nvidia's latest chip, Vera Rubin, later this year.

Over time, the trend of hyperscalers like Google, Amazon, and Microsoft developing their own AI chips could shift the landscape. As enterprises move more of their AI workloads to these cloud platforms, the cloud providers' reliance on Nvidia may eventually diminish.

Despite the potential for competition, Nvidia, with a market capitalization of nearly $5 trillion, remains a formidable player in the chip market. Industry analysts, including Patrick Moore, have previously speculated that Google's TPUs would hurt Nvidia and Intel, but so far Nvidia has continued to thrive.

Google has also announced a collaboration with Nvidia on computer networking, aimed at improving the performance of Nvidia-based systems within its cloud. The partnership focuses on Falcon, a software-based networking technology that Google developed and open-sourced in 2023 through the Open Compute Project.

Taken together, the announcements point to AI infrastructure that is becoming more efficient and more capable, though for now Google's chips complement Nvidia's rather than replace them.